Data Silos: Are They Really a Problem?

Data silos happen naturally for many reasons. As an organization grows and its security program matures, it will likely end up in one or more of the following scenarios.

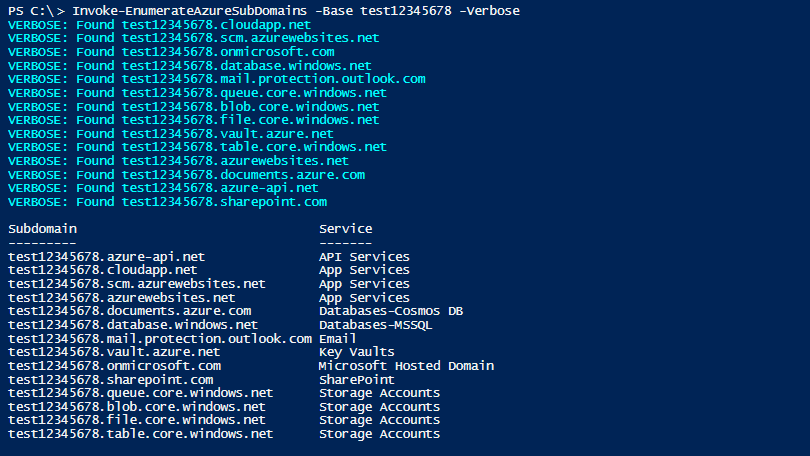

Using multiple security testing scanners: As the security landscape evolves, so does the range of security testing an organization needs, including SAST and DAST/IAST tools, network perimeter tools, internal or third-party penetration testing, and adversarial attack simulation. Companies that once got by with a single SAST tool, DAST tool, and network scanner begin adding others to the toolkit, possibly alongside additional penetration testing firms and ticketing or GRC platforms.

Tracking remediation across multiple tools: One business unit’s development team might work in one Jira instance, for example, while another business unit uses a separate instance or a different ticketing system altogether.

What Problems Do Data Silos Create in a Security Testing Environment?

Data silos can create several problems in a security testing environment. Two common challenges we see are duplicate vulnerabilities and false positives.

Let’s take a look at each one:

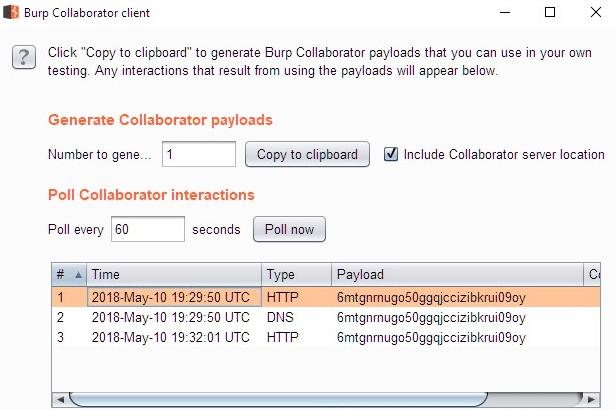

Duplicate vulnerabilities: This happens easily. Say you scan with both a SAST and a DAST tool, and both report an XSS vulnerability on the same asset: your team receives multiple tickets for the same issue. Or say you run a perimeter scan and a PCI penetration test against the same IP range your vulnerability management team scans. Both report the same missing patch, and your organization receives duplicate remediation tickets. If this happened only once, it would be no big deal. But across multiple sites and thousands of identified vulnerabilities, duplicates create significant extra work for already busy remediation teams. The result: contention across departments and slower remediation.
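To make the duplicate-ticket problem concrete, here is a minimal Python sketch of correlating findings from different tools into one ticket per issue. The `Finding` type, the `correlation_key` and `deduplicate` helpers, and the matching rule (same asset plus same vulnerability ID, after normalizing case and whitespace) are hypothetical illustrations, not how NetSPI Resolve or any particular scanner works. Real correlation also has to reconcile tool-specific identifiers and locations, such as a SAST file path versus a DAST URL.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    tool: str      # scanner or test that reported it, e.g. "perimeter-scan"
    asset: str     # host, IP address, or application the finding applies to
    vuln_id: str   # identifier for the issue, e.g. a CVE or CWE ID


def correlation_key(f: Finding) -> tuple[str, str]:
    # Hypothetical matching rule: same asset + same vulnerability ID = same issue.
    # Normalize case and whitespace so "cve-2021-44228" and "CVE-2021-44228" match.
    return (f.asset.strip().lower(), f.vuln_id.strip().upper())


def deduplicate(findings: list[Finding]) -> dict[tuple[str, str], list[str]]:
    """Group findings into one ticket per correlation key, keeping every reporting tool."""
    tickets: dict[tuple[str, str], list[str]] = {}
    for f in findings:
        tickets.setdefault(correlation_key(f), []).append(f.tool)
    return tickets


if __name__ == "__main__":
    findings = [
        Finding("perimeter-scan", "10.0.0.5", "CVE-2021-44228"),
        Finding("pci-pentest", "10.0.0.5", "cve-2021-44228"),
        Finding("perimeter-scan", "10.0.0.6", "CVE-2021-44228"),
    ]
    for key, tools in deduplicate(findings).items():
        print(key, tools)
```

Here three raw findings collapse into two tickets, and each ticket keeps the list of tools that reported it, so the remediation team works one item with its supporting evidence instead of two separate tickets.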

False positives: False positives create extra work, can leave teams feeling they’re chasing ghosts, and reduce confidence in security testing reports. Combine them with duplicate vulnerabilities, and the problems multiply. For example, say your security team reports a vulnerability from its SAST tool. The development team researches it and documents why it is a false positive. The security team marks it as a false positive, and everyone moves on. Then the security team runs its DAST tool, which finds the same vulnerability and reports it to the development team. The developers repeat the same research and provide the same explanation of why it is still a false positive. Now you have duplicated work, plus the potential for friction between security and development teams.
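One way to avoid re-triaging is to store a false-positive decision against the correlated issue rather than against the tool that reported it. The Python sketch below illustrates that idea under stated assumptions: `TriageLog`, `mark_false_positive`, and `route` are hypothetical names, not a real product API, and matching on asset plus CWE alone is deliberately coarse (one application can contain several genuine XSS issues), so a production rule would also compare normalized locations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    tool: str      # reporting tool, e.g. "sast" or "dast"
    asset: str     # application or host the finding applies to
    vuln_id: str   # vulnerability class or ID, e.g. "CWE-79" for XSS


def correlation_key(f: Finding) -> tuple[str, str]:
    # Hypothetical, deliberately coarse rule: same asset + same vulnerability ID.
    return (f.asset.strip().lower(), f.vuln_id.strip().upper())


class TriageLog:
    """Stores false-positive decisions by correlation key rather than by tool."""

    def __init__(self) -> None:
        self._false_positives: dict[tuple[str, str], str] = {}

    def mark_false_positive(self, f: Finding, justification: str) -> None:
        # Record the development team's verification once, for the issue itself.
        self._false_positives[correlation_key(f)] = justification

    def route(self, f: Finding) -> str:
        # Reuse an earlier decision if any tool already reported this issue.
        justification = self._false_positives.get(correlation_key(f))
        if justification is not None:
            return f"suppressed: {justification}"
        return "ticket"


if __name__ == "__main__":
    log = TriageLog()
    sast = Finding("sast", "payments-web", "CWE-79")
    log.mark_false_positive(sast, "output is encoded by the template engine")
    dast = Finding("dast", "Payments-Web", "cwe-79")
    print(log.route(dast))  # suppressed: output is encoded by the template engine
```

Because the decision is keyed to the issue, the later DAST result inherits the justification from the earlier SAST triage instead of generating a new ticket for the development team.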

Why Do These Problems Happen, and How Can You Stop Them?

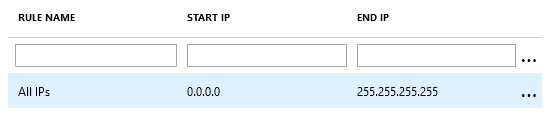

Many security scanner vendors answer with a walled garden: a closed platform whose tools cannot ingest vulnerabilities from outside the vendor’s own solution suite. This approach may benefit the vendor, but it hamstrings your security teams. Organizations that rely on these platforms must either forgo best-in-breed tools for specific purposes or, if they add outside tools anyway, lose a single, coherent, enterprise-wide view of their vulnerabilities.

NetSPI recommends choosing a vulnerability orchestration platform that preserves tool choice while still delivering a single system of record for all vulnerabilities. A platform that automatically aggregates, normalizes, correlates, and prioritizes vulnerabilities lets organizations stay agile and test emerging technologies with commercial, open source, or even homegrown security tools. Not only does this minimize the challenges caused by data silos, it can also let security teams get more testing done, more quickly.

When we built NetSPI Resolve™, our own vulnerability orchestration platform, we built it to eliminate walled gardens. Development of the platform began almost twenty years ago, and it is the first commercially available security testing automation and vulnerability correlation software platform that empowers you to reduce the time required to identify and remediate vulnerabilities. As a technology-enabled service provider, we didn’t want to limit our testers to specific tools, so NetSPI Resolve lets them choose the best tools and technology. More than that, because NetSPI Resolve can ingest and integrate data from multiple tools, it also provides our testers with comprehensive, automated reporting, ticketing, and SLA management. By reducing or eliminating the time spent on these tasks, NetSPI Resolve allows testers to do what they do best: test.

Data silos aren’t inevitable, but they are common. Knocking them down will go a long way toward reducing your organization’s cybersecurity risk by shortening your overall time to remediate.
